10/05/2014

SMTP over Hidden Services with postfix

More and more privacy experts are nowadays calling on people to move away from the email service provider giants (gmail, yahoo!, microsoft, etc) and are urging them to set up their own email services, to “decentralize”. This brings up many other issues though. One of them is that if only a small group of people use a certain email server, then even if they use TLS it’s relatively easy for someone passively monitoring (email) traffic to correlate who (from some server) is communicating with whom (from another server). Even if the connection and the content are protected by TLS and GPG respectively, some people might feel uncomfortable if a third party knew that they are actually communicating (well, these people had better not use email, but let’s not get carried away).

This post is about sending SMTP traffic between two servers on the Internet over Tor, that is, without someone being able to easily see who is sending what to whom. IMHO, it can be helpful in some situations to certain groups of people.

There are numerous posts on the Internet about how you can Torify all the SMTP connections of a postfix server. The problem with this approach is that most exit nodes are blacklisted by RBLs, so it’s very probable that the emails sent will either not reach their target or get marked as spam. Another approach is to create hidden services and make users send emails to each other at their hidden service domains, e.g. username@a2i4gzo2bmv9as3avx.onion. This is quite uncomfortable for users and will probably never get adopted.

There is yet another approach though: the communication could happen over Tor hidden services that real domains are mapped to.

HOWTO

Both sides need to run a Tor client:

aptitude install tor torsocks

The setup is as follows. The postmaster on the receiving side sets up a Tor Hidden Service for their SMTP service (receiver). This is easily done on their server (server-A) with the following lines in the torrc (Tor needs a HiddenServiceDir before any HiddenServicePort; the path here is the one used further down in this post):

HiddenServiceDir /var/lib/tor/smtp_onion
HiddenServicePort 25 25

Let’s call this HiddenService-A (abcdefghijklmn12.onion). The postmaster then needs to notify other postmasters of this hidden service.
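
Tor writes the generated address to a file named hostname inside the HiddenServiceDir once it has been restarted. A minimal sketch for reading it back, assuming the directory is /var/lib/tor/smtp_onion (borrowed from the torrc example further down in this post); onion_hostname is a hypothetical helper name, not something shipped with Tor:

```shell
# onion_hostname DIR: hypothetical helper, prints the .onion address that
# Tor stored in DIR/hostname. DIR is whatever HiddenServiceDir points to.
onion_hostname() {
    dir=${1:-/var/lib/tor/smtp_onion}   # assumed default, adjust to your torrc
    if [ ! -r "$dir/hostname" ]; then
        echo "cannot read $dir/hostname (is tor running, are you root?)" >&2
        return 1
    fi
    # The file holds a single line such as abcdefghijklmn12.onion
    head -n 1 "$dir/hostname"
}
```

Run it as root (or as the tor user), since the directory is normally not readable by anyone else, and hand the printed address to the other postmasters.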

The postmaster on the sending side (server-B) needs to create 2 things, a torified SMTP service (sender) for postfix and a transport map that will redirect emails sent to domains of server-A to HiddenService-A.

Steps needed to be executed on server-B:

1. Create /usr/lib/postfix/smtp_tor with the following content:

#!/bin/sh
torsocks /usr/lib/postfix/smtp "$@"

2. Make it executable

chmod +x /usr/lib/postfix/smtp_tor

3. Edit /etc/postfix/master.cf and add a new service entry

smtptor unix - - - - - smtp_tor

For Debian Stretch and/or for postfix 2.11+ this should be:

smtptor unix - - - - - smtp_tor -o smtp_dns_support_level=disabled

4. If you don’t already have a transport map file, create /etc/postfix/transport with content (otherwise just add the following to your transport maps file):

domain-a.net smtptor:[abcdefghijklmn12.onion]
domain-b.com smtptor:[bbbcccdddeeeadas.onion]

5. If transport_maps is not already set, edit /etc/postfix/main.cf and add the following:

transport_maps = hash:/etc/postfix/transport

6. Run the following:

postmap /etc/postfix/transport && service postfix reload
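
Before reloading it can help to sanity-check the map. The square brackets around the onion address matter: in postfix a bracketed next hop is used as-is, without an MX lookup, and an .onion name has no MX record to find. The sketch below is a hypothetical helper (check_transport is not part of postfix) that flags smtptor entries whose next hop is not a bracketed .onion name. The pattern is deliberately loose so that it also accepts the placeholder addresses used in this post.

```shell
# check_transport FILE: hypothetical helper, not part of postfix.
# Prints one line per smtptor entry whose next hop is not of the form
# [something.onion] (optionally followed by :port); exits non-zero if any.
check_transport() {
    awk '
        /^[ \t]*(#|$)/ { next }     # skip comments and blank lines
        $2 ~ /^smtptor:/ && $2 !~ /^smtptor:\[[a-z0-9]+\.onion\](:[0-9]+)?$/ {
            printf "line %d: unbracketed or malformed next hop: %s\n", NR, $2
            bad = 1
        }
        END { exit bad + 0 }
    ' "$1"
}
```

Call it as check_transport /etc/postfix/transport. Once the map has been compiled, postmap -q domain-a.net hash:/etc/postfix/transport shows what postfix itself will look up for that domain.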

7. If you’re running torsocks version 2 you need to set AllowInbound 1 in /etc/tor/torsocks.conf. If you’re using torsocks version 1, no changes are necessary.

Conclusion

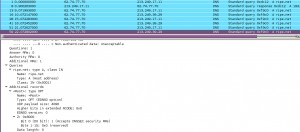

Well that’s about it. Now every email sent from a user of server-B to username@domain-a.net will actually get sent over Tor to server-A on its HiddenService. Since HiddenServices are usually mapped to 127.0.0.1, the connection reaches postfix from localhost and will bypass the usual sender restrictions. Depending on the setup of the receiver it might even evade spam detection software, so beware… If both postmasters follow the above steps then all emails sent from users of server-A to users of server-B and vice versa will be sent anonymously over Tor.

There is nothing really new in this post, but I couldn’t find any other posts describing such a setup. Since it requires both sides to actually do something for things to work, I don’t think it can ever be used widely, but it’s still yet another way to take advantage of Tor and Hidden Services.

!Open Relaying

When you set up a Tor hidden service to accept connections to your SMTP server, you need to be careful that you aren’t turning your mail server into an open relay on the Tor network. Inspect your configuration very carefully to see if you are allowing connections from 127.0.0.1 to relay mail. If you are, there are a couple of ways to stop it.

You are allowing 127.0.0.1 to relay mail if smtpd_recipient_restrictions in your postfix configuration contains permit_mynetworks and your mynetworks variable includes 127.0.0.0/8 (the default). The Tor hidden service will connect via 127.0.0.1, so if you allow that address to send without authentication, you are an open relay on the Tor network, and you don’t want that…
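
The check described above can be sketched as a small filter over the output of postconf, which prints settings as "name = value" lines. list_loopback_trust is a hypothetical helper name. It only reports where loopback and permit_mynetworks show up and does not decide on its own whether the server is an open relay, since that depends on how the restriction lists combine.

```shell
# list_loopback_trust: hypothetical helper, not part of postfix.
# Reads "name = value" lines (the format printed by postconf) on stdin and
# reports the settings that matter for the open-relay question.
list_loopback_trust() {
    awk '
        {
            name = $1
            value = $0
            sub(/^[^=]*=[ \t]*/, "", value)   # keep only the text after "="
        }
        name == "mynetworks" && value ~ /(^|[ \t,])127\./ {
            print "mynetworks covers loopback"
        }
        name ~ /^smtpd_.*_restrictions$/ && value ~ /permit_mynetworks/ {
            print name " contains permit_mynetworks"
        }
    '
}
```

A possible invocation is postconf mynetworks smtpd_recipient_restrictions smtpd_relay_restrictions | list_loopback_trust. If both kinds of line appear in the output, read on.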

Three ways of dealing with this.

1. Remove 127.0.0.1 from mynetworks and use port 25/587 as usual.

2. Create a new secondary transport that has a different set of restrictions. Copy the restrictions from main.cf and remove ‘permit_mynetworks’ from them:

/etc/postfix/master.cf

2525 inet n - - - - smtpd
  -o smtpd_recipient_restrictions=XXXXXXX
  -o smtpd_sender_restrictions=YYYYYY
  -o smtpd_helo_restrictions=ZZZZ
2587 inet n - - - - smtpd
  -o smtpd_enforce_tls=yes
  -o smtpd_tls_security_level=encrypt
  -o smtpd_sasl_auth_enable=yes
  -o smtpd_client_restrictions=permit_sasl_authenticated,reject
  -o smtpd_sender_restrictions=
  -o smtpd_recipient_restrictions=XXXXXXX
  -o smtpd_sender_restrictions=YYYYYY
  -o smtpd_helo_restrictions=XXXXX

Then edit your /etc/tor/torrc

HiddenServiceDir /var/lib/tor/smtp_onion
HiddenServicePort 25 2525
HiddenServicePort 587 2587

3. If your server is not used by other servers to relay email, then you can use smtpd_relay_restrictions, the newer postfix variable that was designed for restricting relays (remember NOT to use permit_mynetworks there), to allow emails to be “relayed” by the onion service:

/etc/postfix/main.cf

smtpd_relay_restrictions = permit_sasl_authenticated,
    reject_unauth_destination
smtpd_recipient_restrictions = reject_unknown_recipient_domain,
    check_recipient_access hash:$checks_dir/recipient_access,
    permit_sasl_authenticated,
    permit_mynetworks,
    permit

Concerns

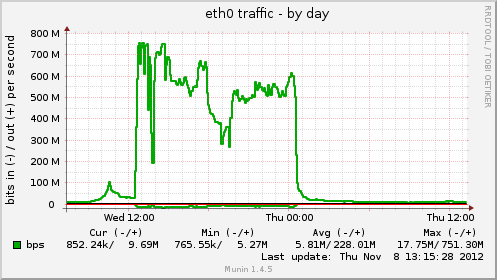

Can hidden services scale to support hundreds or thousands of connections, e.g. from a mailing list? Who knows…

This type of setup needs the help of big fishes (large independent email providers like Riseup) to protect the small fishes (your own email server). So a new problem arises: bootstrapping. I’m not really sure this problem has any elegant solution. The more servers use this setup though, the more useful it becomes against passive adversaries trying to correlate who communicates with whom.

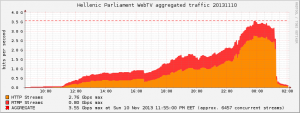

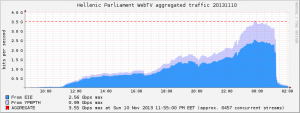

The above setup works better when there is more than one hidden service running on the receiving side, so a passive adversary won’t really know that the incoming traffic is SMTP, e.g. when you also run a (busy) HTTP server as a hidden service on the same machine.

Hey, where did MX record lookup go?

Trying it

If anyone wants to try it, you can send me an email using voidgrz25evgseyc.onion as the Hidden SMTP Service (in the transport map).

Links:

http://www.postfix.org/master.5.html

http://www.groovy.net/ww/2012/01/torfixbis

ehloonion/onionmx github repository

*Update 01/02/2015 Added information about !Open Relaying and torsocks version 2 configuration*

*Update 11/10/2016 Updated information about !Open Relaying*

*Update 14/06/2018 Added link to ehloonion/onionmx*

Filed by kargig at 22:14 under Encryption,Internet,Linux,Networking,Privacy


Tags: email, hidden service, postfix, Privacy, smtp, tor

6 Comments | 55,637 views
